In Part 1, I covered Stages 1 and 2 - how Bedrock gave us LLM access, and how the Converse API gave us tools. But even after all that, shipping an agent to production still took months. The weird part? It wasn't the AI that was slow. It was us. We were still writing orchestration code, session handlers, retry logic, infrastructure that had nothing to do with intelligence. The frameworks we were using to "help" us build agents started getting in our way.

Turns out, AWS was hitting the same wall internally. This moves us from the era of intelligence to the era of agency.

Stage 3: The Agency Revolution (Strands SDK)

Strands wasn't born as a product. It was an internal tool that AWS teams used to build Amazon Q Developer, AWS Glue, and VPC Reachability Analyzer (you can read more about this here). In May 2025, AWS open-sourced it. It has over 14 million downloads now.

This wasn't just a feature update; it was a philosophical shift. AWS realized that developers don't want to write JSON schemas or architect complex context-retrieval mechanisms. We want to write Python.

MCP was the buzzword around this time. Getting context into these models was becoming less of a blocker: tool calls removed the need for huge prompt instructions, copy-pasted documentation, and hand-built action groups. Amazon knew they needed something before they fell behind again.

This is where Strands fits in. It lets you write functions (API calls, DB queries, etc.) and expose them as tools for your model to use. A tool is just a fancy word for an API, but it sits one layer above the "Application Layer"; let's call it the "Logical Layer".

To define a tool, you write a function under the @tool decorator, and the agent registers every tool specification before the first prompt is even sent. You are essentially creating a model that is fully aware of its capabilities, knowing which tools to use and, more importantly, when to use them. The model judges when to invoke them based on your docstrings and the system prompt.

This is also where Strands has an edge over existing frameworks. LangChain and LangGraph have you define the entire DAG/workflow up front. Strands lets the model decide the path dynamically at runtime. According to a study of 3,000+ GitHub issues and Stack Overflow posts on AI agents, Tool-Use Coordination (configuring when and how agents invoke tools) accounts for 23% of developer questions. Strands offloads that decision to the model.

The "No Scaffolding" Approach

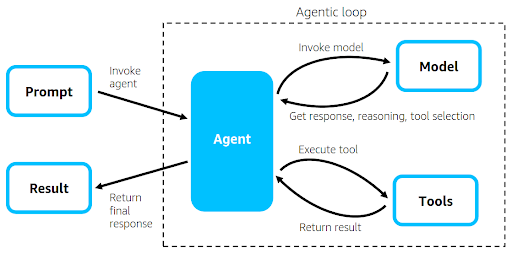

The beauty of Strands is that it strips away the orchestration boilerplate. It is purely the model and the tools. The loop is standard: Invoke Model -> Reasoning -> Tool Selection -> Execute Tool -> Result.

    from strands import Agent, tool
    import requests

    @tool
    def get_weather(city: str) -> str:
        """Get the current weather for a specific city"""
        response = requests.get(f"https://api.weather.com/{city}", timeout=10)
        return response.text  # return a string, matching the annotation

    # Load the tool into your agent
    agent = Agent(tools=[get_weather])
    response = agent("Is it raining in London?")

What's happening here? The same tool definition that took 25 lines with the Converse API in Part 1 is now 8 lines. The @tool decorator infers the schema from your function signature and docstring. No JSON, no toolSpec, no inputSchema.
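To make the schema inference less magical, here is a minimal sketch of the idea using only the standard library. This is not Strands' actual implementation; `infer_tool_spec` and the tiny type mapping are names I made up for illustration, and the `units` parameter is added to `get_weather` only to show how defaults are handled. The output shape follows the Converse-style toolSpec from Part 1.

```python
import inspect
from typing import Any, Callable, get_type_hints

# Python annotation -> JSON Schema type name (deliberately tiny mapping)
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def infer_tool_spec(func: Callable[..., Any]) -> dict:
    """Build a Converse-style toolSpec dict from a plain Python function."""
    hints = get_type_hints(func)
    properties, required = {}, []
    for name, param in inspect.signature(func).parameters.items():
        # Unknown or missing annotations fall back to "string"
        properties[name] = {"type": _JSON_TYPES.get(hints.get(name), "string")}
        # A parameter without a default must be supplied by the model
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "toolSpec": {
            "name": func.__name__,
            "description": inspect.getdoc(func) or "",
            "inputSchema": {
                "json": {
                    "type": "object",
                    "properties": properties,
                    "required": required,
                }
            },
        }
    }


def get_weather(city: str, units: str = "metric") -> str:
    """Get the current weather for a specific city"""
    return f"Weather for {city} in {units} units"


spec = infer_tool_spec(get_weather)
print(spec["toolSpec"]["name"])                             # get_weather
print(spec["toolSpec"]["inputSchema"]["json"]["required"])  # ['city']
```

The point is that the function name, type hints, defaults, and docstring already carry everything the hand-written JSON used to repeat.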

Unlike the Bedrock SDK, Strands is model agnostic. You aren't locked into Bedrock; you can swap the default Bedrock model for an external provider (like OpenAI or Anthropic direct) if you want to. The @tool decorator abstracts away the tool definition, and the SDK manages the reasoning loop, automatically retrying if the model hallucinates a parameter.
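Here is a rough sketch of what such a reasoning loop does, including the retry after a hallucinated parameter. Everything in it is hypothetical: `fake_model` is a scripted stand-in for an LLM, and `run_agent` is a toy loop I wrote for illustration, not the Strands event loop.

```python
from typing import Callable


def get_weather(city: str) -> str:
    """Stand-in tool; a real one would call a weather API."""
    return f"Light rain in {city}"


TOOLS: dict[str, Callable[..., str]] = {"get_weather": get_weather}


def fake_model(messages: list[dict]) -> dict:
    """Scripted stand-in for an LLM: hallucinates a parameter once, then recovers."""
    last = messages[-1]
    if last["role"] == "user":
        # First turn: wrong parameter name ("location" instead of "city")
        return {"tool": "get_weather", "args": {"location": "London"}}
    if last["role"] == "tool" and last["content"].startswith("ERROR"):
        # The error was fed back, so the "model" corrects itself
        return {"tool": "get_weather", "args": {"city": "London"}}
    return {"answer": f"Yes: {last['content']}"}


def run_agent(prompt: str, max_steps: int = 5) -> str:
    messages = [{"role": "user", "content": prompt}]
    for _ in range(max_steps):
        decision = fake_model(messages)      # invoke model / reasoning
        if "answer" in decision:             # no tool requested: done
            return decision["answer"]
        try:                                 # tool selection + execution
            result = TOOLS[decision["tool"]](**decision["args"])
        except (KeyError, TypeError) as exc:
            result = f"ERROR: {exc}"         # report the failure to the model
        messages.append({"role": "tool", "content": result})
    raise RuntimeError("agent did not finish within max_steps")


answer = run_agent("Is it raining in London?")
print(answer)  # Yes: Light rain in London
```

The retry is nothing exotic: the bad call fails, the error goes back into the conversation, and the model gets another turn. The `max_steps` cap is there so a confused model cannot loop forever.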

But there was still one last piece to the puzzle: how do we build this for scale? Agents need a lot of supporting components. Prompt requests must be authorised, sessions need to be isolated, and conversation context must be retained.

Stage 4: The Runtime Layer (AgentCore)

This brings us to the newest player: AgentCore.

You’ve written your Strands agent. It works on your local system. Now, how do you build this for thousands of concurrent users? This is where AgentCore comes in, taking agents from MVP to production.

AWS was never trying to own the agent framework. They're trying to own where your agent runs.

AWS launched Amazon Bedrock AgentCore because the existing cloud primitives (Lambda, Fargate, ECS) were never designed for the peculiar demands of AI agents: long-running, non-deterministic sessions that need per-user isolation, persistent memory, and sandboxed tool execution.

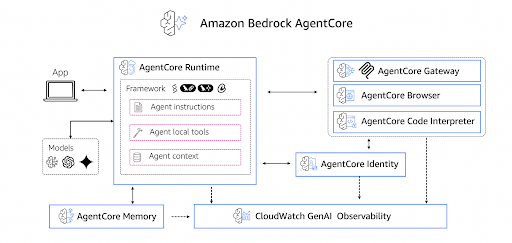

AgentCore consists of Runtime, Memory, Gateway, Observability, and Identity (AuthN and AuthZ), covering every facet of building a chatbot for scale under one umbrella service.

Runtime: The MicroVM Isolation

When you deploy to AgentCore, every user session is spun up in a new microVM. Not a container. A Firecracker microVM with its own kernel, memory space, and network namespace.

- Session-Level Isolation: User A's runtime is isolated from User B's at the virtual machine boundary, so variables and data in one session cannot leak into another.

- Pinned Runtimes: The microVM is "pinned" to the session. It stays alive for up to 8 hours (one of the longest session windows of any managed agent runtime), then gets completely destroyed.

Agent workloads spend 30–70% of execution time in I/O wait: waiting for LLM responses, API calls, and tool results. AgentCore bills CPU only for active consumption, making I/O wait essentially free.

Observability:

When an agent fails, you need to know where. Was it the tool call? The model reasoning? A timeout? AgentCore gives you native OpenTelemetry tracing and CloudWatch integration, so you can see exactly which step broke and why. It generates spans that let you trace the exact path of execution taken by the model.

Memory:

AgentCore Memory handles both short-term and long-term persistence. Short-term memory stores conversation history within a session and can be retrieved at any point. Long-term memory retains context across sessions: user preferences, semantic facts, summaries. You don't have to wire up DynamoDB or Redis yourself.
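To make the two scopes concrete, here is a toy in-memory sketch. It is emphatically not the AgentCore Memory API; `ToyMemory` and its method names are invented for this post. It only shows the difference in keying: short-term history hangs off a session, long-term facts hang off a user.

```python
from collections import defaultdict


class ToyMemory:
    """Toy illustration of the two scopes; NOT the AgentCore Memory API."""

    def __init__(self) -> None:
        # Short-term: ordered conversation turns, keyed by session
        self._turns: dict[str, list[tuple[str, str]]] = defaultdict(list)
        # Long-term: durable facts, keyed by user so they outlive any session
        self._facts: dict[str, dict[str, str]] = defaultdict(dict)

    def add_turn(self, session_id: str, role: str, text: str) -> None:
        self._turns[session_id].append((role, text))

    def history(self, session_id: str) -> list[tuple[str, str]]:
        return list(self._turns[session_id])

    def remember(self, user_id: str, key: str, value: str) -> None:
        self._facts[user_id][key] = value

    def recall(self, user_id: str) -> dict[str, str]:
        return dict(self._facts[user_id])


memory = ToyMemory()
memory.add_turn("session-1", "user", "I prefer Celsius.")
memory.remember("user-a", "temperature_unit", "celsius")

# A new session starts with empty history, but the user's preference survives
print(memory.history("session-2"))  # []
print(memory.recall("user-a"))      # {'temperature_unit': 'celsius'}
```

In a managed service the hard parts are everything this sketch skips: durability, extraction of facts from raw conversation, and retrieval at scale.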

Identity:

This was the piece I didn't know I needed until I tried building auth myself. AgentCore Identity handles inbound and outbound authentication through a managed token vault.

When your agent makes a tool call, Identity carries the user's privileges through to that call, ensuring all responses are scoped to what that user is actually allowed to access.

Gateway:

Gateway transforms existing REST APIs into MCP servers and exposes their operations as tools. It supports both OpenAPI specifications and Smithy models.
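Conceptually, that transformation is a mapping from API operations to tool definitions. The sketch below shows the idea for a single OpenAPI operation. It is my own simplification, not how Gateway is implemented; `openapi_to_tools` and the one-endpoint `weather_api` spec are hypothetical, and the output uses the name, description, and inputSchema fields of an MCP tool definition.

```python
def openapi_to_tools(spec: dict) -> list[dict]:
    """Turn each OpenAPI operation into an MCP-style tool definition."""
    tools = []
    for path, operations in spec.get("paths", {}).items():
        for method, op in operations.items():
            params = op.get("parameters", [])
            tools.append({
                # Fall back to "<method>_<path>" when no operationId is given
                "name": op.get("operationId", f"{method}_{path}"),
                "description": op.get("summary", ""),
                "inputSchema": {
                    "type": "object",
                    "properties": {p["name"]: p.get("schema", {}) for p in params},
                    "required": [p["name"] for p in params if p.get("required")],
                },
            })
    return tools


# Hypothetical one-endpoint API, invented for this example
weather_api = {
    "paths": {
        "/weather/{city}": {
            "get": {
                "operationId": "getWeather",
                "summary": "Get the current weather for a city",
                "parameters": [
                    {"name": "city", "in": "path", "required": True,
                     "schema": {"type": "string"}}
                ],
            }
        }
    }
}

tools = openapi_to_tools(weather_api)
print(tools[0]["name"])                     # getWeather
print(tools[0]["inputSchema"]["required"])  # ['city']
```

A real converter also has to deal with request bodies, auth, and response shapes, which is exactly the work you are paying a managed service to do.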

What sets AgentCore apart?

It's the deployment experience. You don't write a Dockerfile. Once your agent is deployed, invoking it is a single boto3 call:

    import boto3
    import json

    client = boto3.client('bedrock-agentcore')

    response = client.invoke_agent_runtime(
        agentRuntimeArn="arn:aws:bedrock-agentcore:us-west-2:1234:runtime/my-agent",
        # Session IDs must be at least 33 characters long
        runtimeSessionId="user-session-abc123-0123456789abcdef",
        payload=json.dumps({"prompt": "What is the weather?"})
    )

The deployment itself runs through the agentcore-starter-toolkit CLI:

    agentcore configure --entrypoint my_agent.py
    agentcore deploy

AgentCore then generates the Dockerfile from your requirements.txt, builds the image using AWS CodeBuild, pushes it to ECR, and deploys to a managed endpoint. You don't need Docker installed locally.

While the functionality is there, the developer experience still has rough edges. The documentation assumes you already know how the pieces fit, and when something breaks, the error messages don't always point you in the right direction.

So, How to Choose Your Stack?

We are in a transition phase where software is desperately trying to catch up with AI velocity. Here is my rule of thumb:

| Stage | What It Is | When to Use |
| --- | --- | --- |
| 1. Bedrock SDK | Direct model invocation | Quick prototypes, single model calls |
| 2. Converse API | Unified model interface | Multi-model support, basic tool use |
| 3. Bedrock Agents + Knowledge Bases | Managed agents | Simple linear tasks with fast context retrieval (FAQ bots, basic RAG) |
| 4. Strands SDK | Agent framework | Multi-tool orchestration, dynamic multi-agent workflows |
| 5. AgentCore | Production runtime | Taking agents to production |

These aren't always sequential. Strands uses the Converse API under the hood, and AgentCore can run any framework, such as Strands, LangGraph, or CrewAI. Bedrock Agents is a separate managed path for simpler use cases.

What's next?

AWS has already announced an Agent Marketplace with 800+ pre-built agents. Expect managed agent evaluation and agent-to-agent orchestration next. The abstraction layer keeps moving up.

The infrastructure is being commoditized. A year from now, deploying an agent will feel as mundane as deploying a Lambda. The hard part will be building one worth deploying.